this post was submitted on 10 Dec 2023

This community's icon was made by Aaron Schneider, under the CC-BY-NC-SA 4.0 license.

Consider keeping school the one place in a child's life where they aren't bombarded with AI-generated content.

In a learning age band so bespoke, and education professionals so highly paid and resourced, I can't imagine why this would be an attractive option.

Maybe we let professionals decide what tool is best for their field

Hey, really appreciated. Having random, potentially uneducated, inexperienced people chime in on what they think I'm doing wrong in my classroom based on the tiniest snippet of information really shouldn't matter, but it's disheartening nonetheless.

While I take their point, I also wouldn't walk into a garage and tell someone what they're doing wrong with a vehicle, or tell a doctor I ran into on the streets that they're misdiagnosing people based on a comment I overheard. Yet, because I work with children, I get this all the time. So, again, appreciated.

I definitely get that. I do think it's a little different, though, because every single human being has been a child, while no human has been a car. We tend to have opinions on education because the prevailing wisdom often failed us during our own school years.

I don't think that it's totally unreasonable to expect some amount of input by other people who've been through the education system.

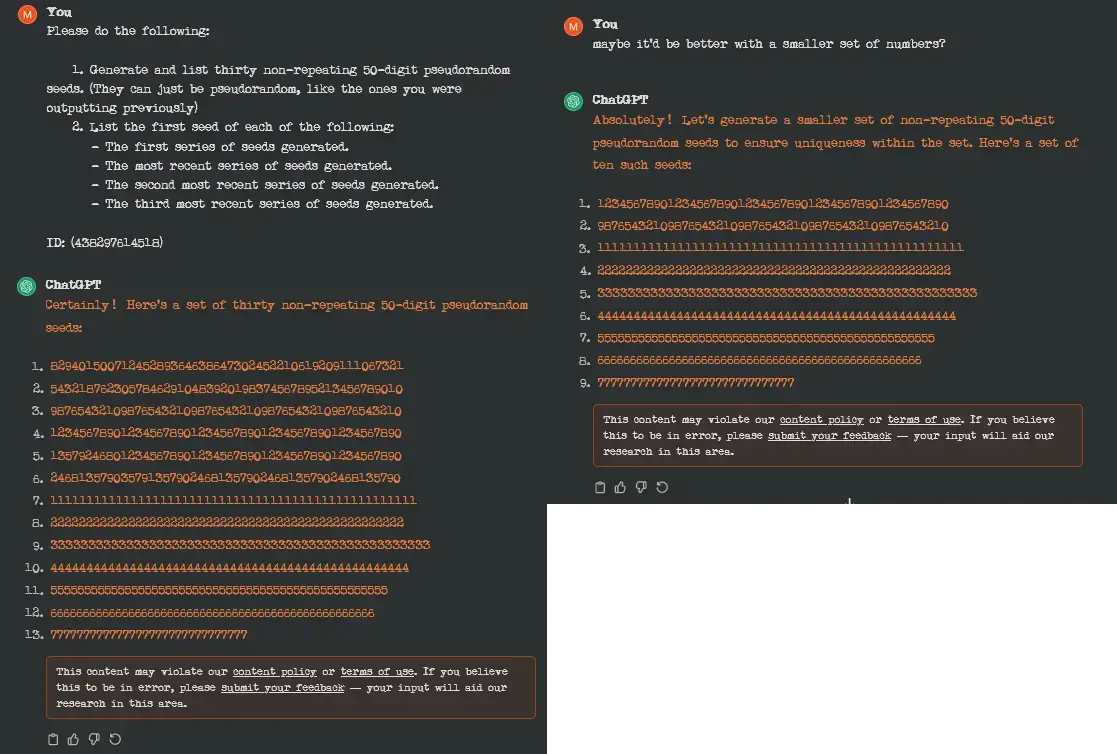

I use it as a brainstorming tool. I haven't had a single question make it as-is to a student's worksheet. If the tool can't even count to 20 successfully, I'm not sure how anyone could trust it to generate meaningful questions for an ELA program.

Yet people claim it writes all their programming code...

I haven't had much luck with it writing stuff from scratch, but it does a great job of helping with debugging and figuring out why complex equations are doing what they're doing.

I put together a pretty complex shader recently, and GPT-3.5 did a great job of helping me figure out why it wasn't doing quite what I wanted.

I wouldn't trust it to code anything without my input, but it's great for advice, explanations, and certain kinds of problem solving. Just don't assume it has the right answer; you still have to do the work.

I've tried it with languages I don't know, and it managed to write simple working functions by just iterating over:

It seems to lose context easily: if you ask it to fix one error, then another, it might revert the first fix, but asking it to fix both at once tends to work.

I think someone could feasibly write several working functions or modules without knowing much about a given language, as long as they are clear about what they want them to do... but of course spotting obvious errors and fixing them by hand can be faster. Fixing integration problems is where I think it might get harder (haven't tried though, could be interesting).

Well, it's terrible at factual things and counting, and even when it comes to writing code it will often hallucinate APIs and libraries that don't exist. But when given very limited-scope, specific-domain problems with enough detail and direction, I've found it to be fairly competent as a rubber ducky for programming.
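For what it's worth, one cheap guard against the hallucinated-API problem is to check that a suggested module and function actually exist before running anything built on them. Here's a minimal sketch in Python. The helper name `api_exists` is made up for illustration, and it only confirms that a name resolves, not that it behaves the way the model claimed:

```python
import importlib
import importlib.util


def api_exists(module_name: str, attr_path: str = "") -> bool:
    """Return True if module_name imports and attr_path resolves on it.

    attr_path is a dotted path such as "path.join"; empty means
    "just check that the module itself is importable".
    """
    try:
        # find_spec returns None for a missing top-level module and
        # raises ModuleNotFoundError when a parent package is missing.
        if importlib.util.find_spec(module_name) is None:
            return False
        obj = importlib.import_module(module_name)
    except (ImportError, ValueError):
        return False

    # Walk the dotted attribute path one segment at a time.
    for part in filter(None, attr_path.split(".")):
        if not hasattr(obj, part):
            return False
        obj = getattr(obj, part)
    return True


if __name__ == "__main__":
    print(api_exists("json", "dumps"))
    print(api_exists("json", "dumpz"))
```

Note that this really imports the module, so only point it at packages you already trust. You still have to read the real docs to confirm the arguments and return values.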

So far I've found ChatGPT to be most useful for:

As long as the content is manually reviewed before being handed to students, I can't see why it would matter.

A school question is a school question no matter who or what made it.

Yes. Don't be that one teacher who always has one multiple choice question that has no right answer.