this post was submitted on 21 Sep 2024

50 points (79.8% liked)

Asklemmy



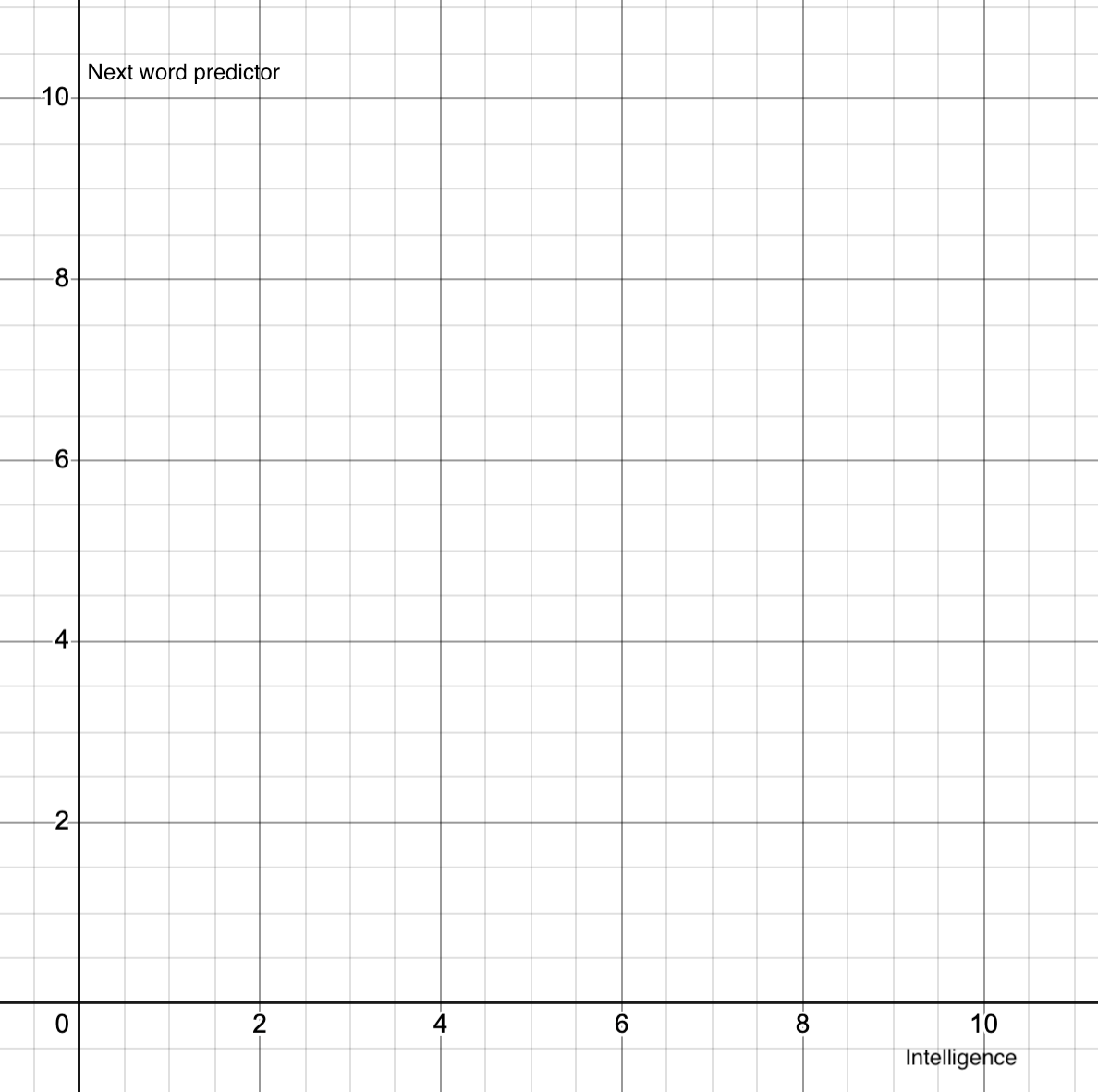

But how do you know the human brain isn't just a super sophisticated next-thing predictor, one that, by being so sophisticated, manages to incorporate nuance and all that stuff and actually be intelligent? Not saying it is, but still.

Because we have reason and understanding. Take something as simple as the XY problem (asking about your attempted solution, Y, instead of the actual problem, X). Humans understand that there are nuances to prompts and questions. I like the XY problem as an example because a human knows to step back and ask, "What are you really trying to do?" AI doesn't have that capability; it doesn't have the reasoning to say, "Maybe your approach is wrong."

So, I'm not the one to define what it is or on what scale. But I can say that it's not human intelligence.