this post was submitted on 08 Jul 2024

837 points (96.9% liked)

Science Memes



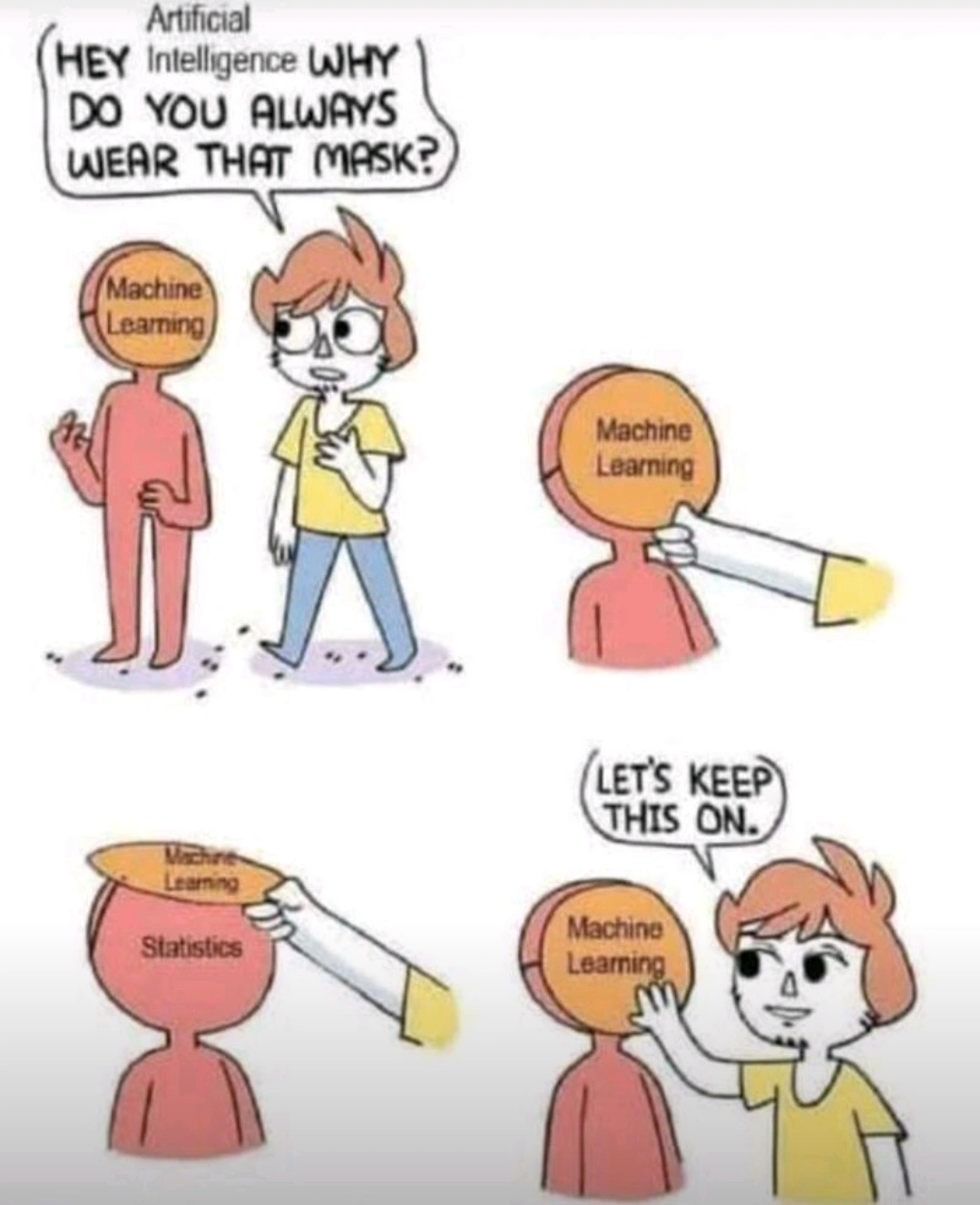

Contemporary LLMs are static, but LLMs are not static by definition.

Could you point me towards one that isn't? Or is this something still in the theoretical?

I'm really trying not to be rude, but there's a massive amount of BS being spread around based on what is theoretically possible with these things. AI is in a massive bubble right now, with life-changing amounts of money on the line. A lot of people have a strong vested interest in everyone believing that the theoretical possibilities are just a few months or years away from reality.

I've read enough Popular Science magazine, and heard enough "This is the year of the Linux desktop" to take claims of where technological advances are absolutely going to go with a grain of salt.

Remember that Microsoft chatbot that 4chan turned into a Nazi in less than a day? That was a self-updating language model using 2010s technology (versus the small-country-sized energy drain of GPT-4).

But they are. There's no feedback loop or continuous training happening. Once an instance or conversation is done, all that context is gone. The context is never integrated directly into the model as it happens. Integrating it as it happens is more or less the way our brains work: every stimulus, every thought, every sensation, every idea is added to our brain's model as it arrives.
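To make the distinction concrete, here's a rough toy sketch in Python. It is not how any real LLM is implemented: `ToyModel`, its `online` flag, and the word-count "weights" are all made up for illustration. The only point is to show a model whose parameters stay frozen while the per-conversation context is thrown away, next to one that folds every input into its parameters as it arrives.

```python
from collections import Counter


class ToyModel:
    """Toy stand-in for a language model; word counts play the role of weights."""

    def __init__(self, training_text: str, online: bool = False):
        # "Training": count the words in the training text once, up front.
        self.weights = Counter(training_text.lower().split())
        # Hypothetical flag: should the model keep learning while in use?
        self.online = online

    def chat(self, messages: list[str]) -> str:
        context: list[str] = []  # lives only for this one conversation
        for message in messages:
            words = message.lower().split()
            context.extend(words)
            if self.online:
                # Continuous training: every input changes the model itself.
                self.weights.update(words)
        # "Reply" with the context word the model has seen most often overall.
        return max(context, key=lambda w: self.weights[w])
        # The context goes out of scope here; nothing carries over.


static = ToyModel("the cat sat on the mat")
online = ToyModel("the cat sat on the mat", online=True)

before = static.weights.copy()
static.chat(["zebras like the savanna"])
online.chat(["zebras like the savanna"])

print(static.weights == before)   # the static model's weights did not change
print(online.weights["zebras"])   # the online model absorbed the new word
```

In the static case, the next conversation starts from exactly the same weights no matter what was said in this one. In the online case, every conversation leaves a trace in the model, which is also what makes that setup easy to steer with bad input.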