this post was submitted on 12 Dec 2023

695 points (97.9% liked)

People Twitter

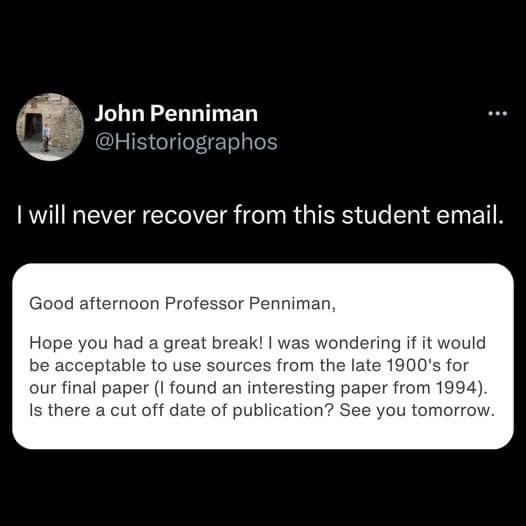

Had a class where the cutoff was 17 years IIRC so it's entirely possible that sources from the 90s aren't accepted in their class.

Yeah, I looked at this and wondered what was so surprising about the text; I’m the same age as this incredible paper and I’ve regularly had professors that wouldn’t accept something that old. To be honest, what I landed on is OOP is also a ‘94 baby who’s teaching their first class.

Calling the '90s the "late 1900s" is mega weird for anyone who isn't really young

Being born in 2000 would make you 23 years old today… which means you could feasibly have graduated college by now. So maybe less weird than you think.

Kids born after 2000 have surely still heard of "the nineties"?

I didn’t say it wasn’t weird, just less weird than us 90s kids and older might think at first.

Zoomer genocide when

Age is not genetic pal

Can't make an omelet without cracking some zoomers

It's not genocide if they aren't culling based on genetics.

It's not soon enough

My partner had to write a paper about some medical procedure that was invented in the early 1900s, and they had to use at least two sources of "original research that is at most 2 years old". The whole course was a clusterfuck.

I’ve never heard of this and… why? You shouldn’t cite your sources if they’re too old? What? I get that you should try to find more recent sources for certain things, so the age of a source can be relevant if we’ve learned more in the meantime… but having a cutoff is stupid. Evaluate the sources, and if the information is outdated, criticize that.

It's not that you shouldn't cite them, it's that you shouldn't use them as a source at all because they're considered unreliable for the subject you're working on.

Depending on the point you've reached in your learning career, you might not be equipped to detect and criticize an outdated source.

Some fields also evolve so quickly that what was considered a fact just 20 years ago might have been superseded 5 years later, and again 5 years after that, so the only info that's considered reliable is about 10 years old, and everything else must be ignored unless you're working on a review of the evolution of knowledge in that field.

And what do you do if you want to reference how fast the field moves, or why certain methods aren't used anymore but were found 'good enough' back in the day? You would still have to use the old sources and cite them...

An absolute cutoff doesn't teach you anything... Guidance on how to tell good sources from bad or outdated ones would be much better.

I don't imagine a paper of that scope would have such a restriction.

Especially in fields like computer science where there are many commonly cited cornerstone papers written in the 60s-80s. So much modern stuff builds upon and improves that.

But the 90s were just 10 years ago!