this post was submitted on 19 Oct 2023

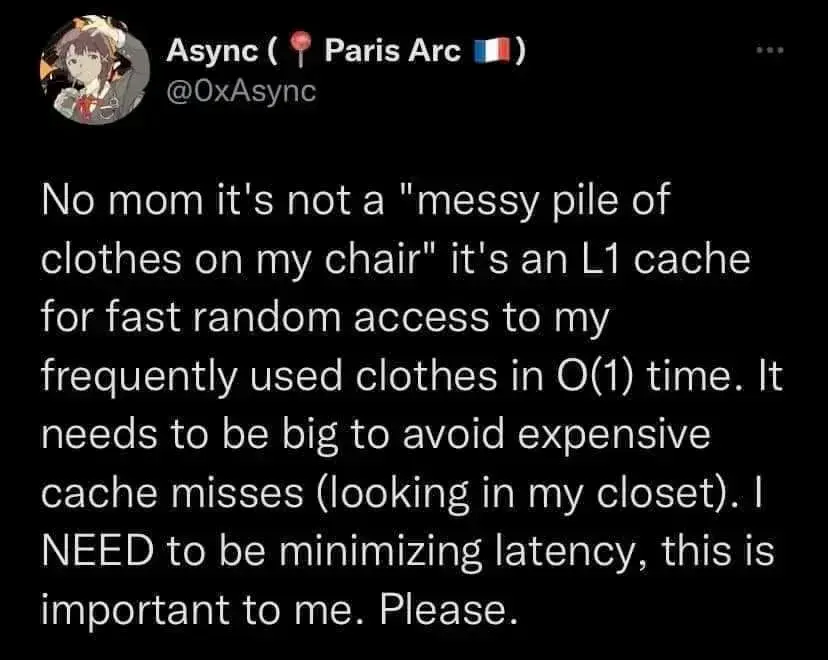

A cache isn't a stack; it's memory organized as cells that can be accessed in parallel. Depending on the implementation, the CPU can in principle go straight to the data it wants by using the address of the cell it's stored in.

Not all L1 caches operate the same way, but in almost all cases it's easy to fetch the data no matter where it physically sits: whether it's at address 0 or address 512, the fetch takes the same time. The problem is when you don't know where the data is. Then you have to use heuristics to guess where it might be, or in the worst case check everywhere in the cache only to find it in the very last place... or find that it isn't there at all, in which case you check L2 cache, then RAM. The whole purpose of a cache is to hold data the CPU thinks it might need again in the future, so fast access is key. And in the ideal case, a lookup could theoretically be done in O(1).
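
To make the O(1) case concrete, here's a rough sketch of a direct-mapped cache, which is just one of several possible designs and not necessarily how any particular CPU does it. The slot to check is computed from the address itself, so a lookup touches exactly one slot no matter where the data lives. The line size, the number of lines, and the names `DirectMappedCache` and `access` are all made up for illustration.

```python
# Toy direct-mapped cache (an assumed, simplified design for illustration).
# The slot index is derived from the address, so each lookup checks one slot.
LINE_SIZE = 64   # bytes per cache line (assumed)
NUM_LINES = 16   # number of lines in the toy cache (assumed)


class DirectMappedCache:
    def __init__(self):
        # Each slot remembers the tag of the line stored there, or None if empty.
        self.tags = [None] * NUM_LINES

    def _split(self, address):
        # Drop the byte offset, then split the block number into (index, tag).
        block = address // LINE_SIZE
        return block % NUM_LINES, block // NUM_LINES

    def access(self, address):
        """Return True on a hit. On a miss, load the line and return False."""
        index, tag = self._split(address)
        if self.tags[index] == tag:
            return True
        # Miss: pretend the line was fetched from L2 or RAM and store its tag.
        self.tags[index] = tag
        return False


cache = DirectMappedCache()
# 0 and 512 map to different slots; 1024 maps to the same slot as 0.
results = [cache.access(a) for a in (0, 512, 0, 512, 1024)]
print(results)
```

The first touches of 0 and 512 miss, the repeats hit, and 1024 misses because it lands in the slot that address 0 was using and evicts it. Real L1 caches are usually set-associative, meaning a handful of candidate slots per address get compared at once in hardware, but the idea is the same: the address tells you where to look, so nothing has to be moved out of the way.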

ETA: I don't personally work with CPUs so I could be very wrong, but I have taken a few CPU architecture classes.

I think the previous commenter's point was that to get to something at the bottom of a pile of clothes, even if you know where it is, you have to move everything on top of it first, like a stack.