It’s a real technical limitation. The major driver of CPU progress was the ability to make transistors smaller, so you could pack more in without needing so much power that they burn themselves up. However, we’ve reached the point where they are so small that it is getting extremely difficult to make them any smaller because of physics. Sure, there is and will continue to be progress in shrinking transistors even more, but it’s just damn hard.

Quite right, the argument seemed coherent to me until I observed the new performance of recent GPUs; it seems that the limits no longer exist.

I think it's legitimate to ask the question: my hypothesis is that the industry is trying to restrict the computing power of consumer machines (for military defence interests?), but the very large market for video games and 3D for video games, on the contrary, is constantly demanding more computing power, and machine manufacturers are obliged to keep up with this demand.

What confuses me, I think, is that I read a serious technical article 15 years ago that talked about a 70 GHz CPU core prototype.

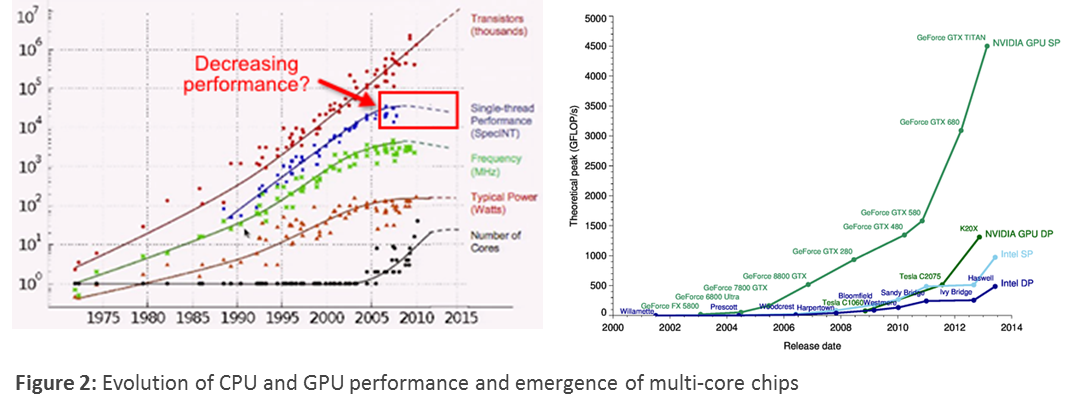

First, GPUs and CPUs are very different beasts. GPU workloads are by definition highly parallelized, so a GPU has an architecture where it is much easier to just throw more cores at the problem, even if you don’t make each core faster. This means that power is less of an issue. Take a look at GPU clock rates vs CPU clocks.

CPU workloads tend to have much, much less parallelism, so there is less and less return on adding more cores.
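
If it helps to see the diminishing returns in numbers, here is a rough sketch using Amdahl's law, which is the usual textbook model for this (my choice of model, it isn't something from the chart). The parallel fractions of 50% and 99% are made-up illustrative values, not measurements of any real CPU or GPU workload, and `amdahl_speedup` is just a name I picked.

```python
def amdahl_speedup(parallel_fraction: float, cores: int) -> float:
    """Ideal speedup on `cores` cores when only `parallel_fraction`
    of the work can be spread across them (Amdahl's law)."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


def main() -> None:
    # Hypothetical workloads: the fractions are assumptions for illustration.
    workloads = [
        ("mostly serial (50% parallel)", 0.50),
        ("highly parallel (99% parallel)", 0.99),
    ]
    for label, fraction in workloads:
        for cores in (2, 8, 64, 1024):
            speedup = amdahl_speedup(fraction, cores)
            print(f"{label:32s} {cores:5d} cores -> {speedup:6.2f}x")


if __name__ == "__main__":
    main()
```

Under these assumptions the half-serial workload can never get past 2x no matter how many cores you add, while the 99% parallel one keeps gaining well into the hundreds of cores. That is roughly why piling on cores pays off for GPU-style work and not for typical CPU work.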

Second, GPUs have started to have lower year over year lift. Your chart is almost a decade out of date. Take a look at, say, a 2080 vs 3080 vs 4080 and you’ll see that the overall compute lift is shrinking.

GPUs aren't limited by die size and system architecture the way CPUs are. GPU chiplets do simple calculations too, so it's almost as simple as putting more of them on a board, which can be as large as the manufacturer desires.

Read about the differences from this Nvidia blog and you'll see that they're wildly different. It'll make sense why they're in different charts in the source you provided originally.

GPUs and CPUs have significantly different architectures, and there is still a ton of low-hanging fruit for improving performance.

What "performance" even means isn't the same thing for the two. CPUs provide a robust instruction set with many features for general purpose computing. GPUs handle a specific subset of problems, do so with a smaller and more specialized instruction set, and are architected for a specific purpose.

Consider that GPUs are much newer than CPUs. The work GPUs do used to be done by the CPU. Why bother inventing a dedicated GPU? Why not just keep adding cores to the CPU? Because they handle a fundamentally different task and are specialized for it.

For several reasons (technology maturity is certainly one) it doesn't make a ton of sense to make an apples-to-apples comparison between CPUs and GPUs. And the idea that CPU single-threaded performance is being artificially restricted by an international government effort in the name of defense security is a much more complicated answer than required to explain the difference.