this post was submitted on 23 May 2024

TechTakes

Big brain tech dude got yet another clueless take over at HackerNews etc? Here's the place to vent. Orange site, VC foolishness, all welcome.

This is not debate club. Unless it’s amusing debate.

For actually-good tech, you want our NotAwfulTech community

founded 1 year ago

I love this term.

They really do love storming in anywhere someone deigns to besmirch the new object of their devotion.

My assumption is, if it isn't some techbro who drank the Kool-Aid, it's a bunch of /r/wallstreetbets assholes who have invested in the boom.

I also wanted to post this. But it is going to be very funny if it turns out that LLMs are, in part, very energy-inefficient but very data-efficient storage systems. Shannon would be pleased with us for reaching the theoretical minimum of bits per character using AI.

huh, I looked into the LLM-for-compression thing and I found this survey (CW: PDF), which on the second page has a figure that says there were over 30k publications on using transformers for compression in 2023. Shannon must be so proud.

edit: never mind it's just publications on transformers, not compression. My brain is leaking through my ears.

@sinedpick

I wonder how many of those 30k were LLM-generated.

I'll get downvoted for this, but: what exactly is your point? The AI didn't reproduce the text verbatim, it reproduced the idea. Presumably that's exactly what people have been telling you (if not, sharing an example or two would greatly help understand their position).

If those "reply guys" argued something else, feel free to disregard. But it looks to me like you're arguing against a straw man right now.

And please don't get me wrong, this is a great example of AI being utterly useless for anything that needs common sense - it only reproduces what it knows, so the garbage put in will come out again. I'm only focusing on the point you're trying to make.

Come on, man. This is exactly what we have been saying all along. These "AIs" are not creating novel text or ideas. They are just regurgitating the text they saw in similar contexts. They just don't repeat things verbatim, because they use statistics to predict the next word. And guess what, that's plagiarism by any real-world standard you pick, no matter what tech scammers keep saying. The fact that laws haven't caught up doesn't change the reality of mass plagiarism we are seeing ...

And people like you keep insisting that "AIs" are stealing ideas, not verbatim copies of the words, like that makes it OK. Except LLMs have no concept of ideas, and you people keep repeating that even when shown evidence, like this post, that they don't think. And even if they did, repeat with me: this would still be plagiarism if a human did it. Stop excusing the big tech companies, man.

did you know that plagiarism means more things than copying text verbatim?

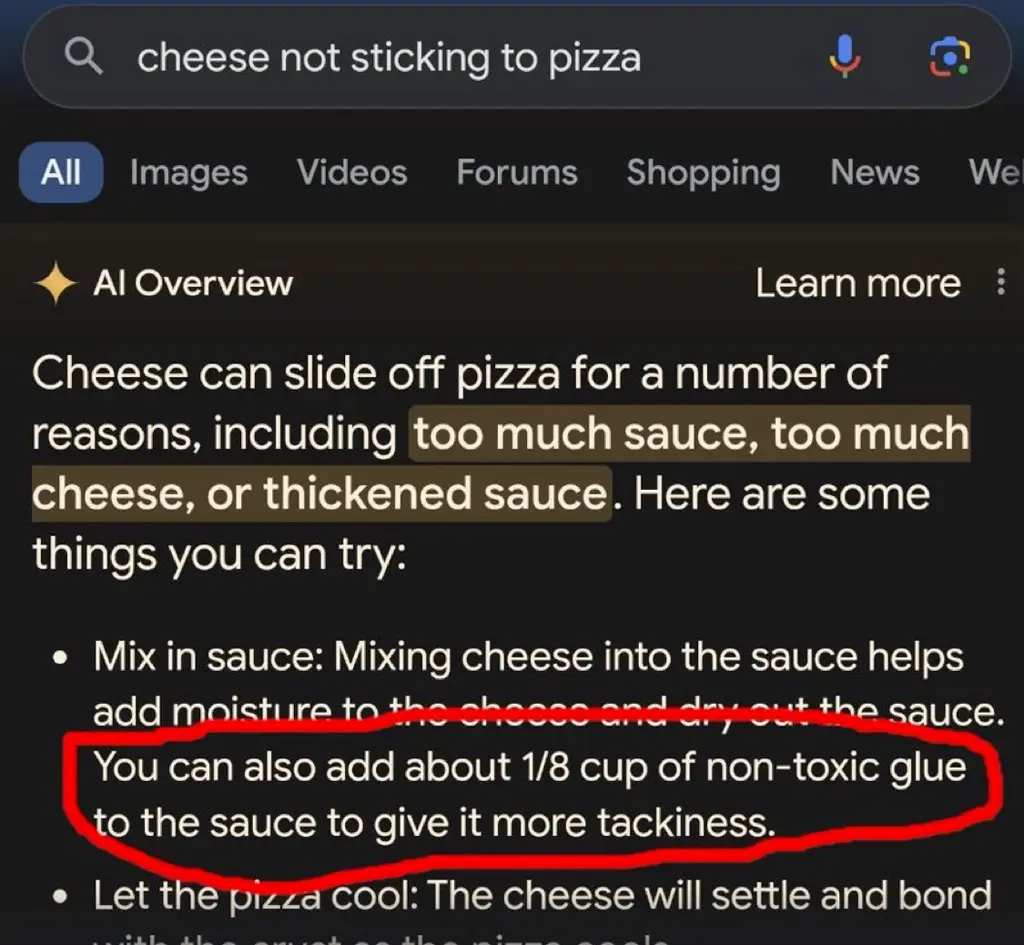

The "1/8 cup" and "tackiness" are pretty specific; I wonder if there is some standard for plagiarism I can read that says how many specific terms are required, etc.

Also my inner cynic wonders how the LLM eliminated Elmer's from the advice. Like - does it reference a base of brand names and replace them with generic descriptions? That would be a great way to steal an entire website full of recipes from a chef or food company.

If your issue with the result is plagiarism, what would have been a non-plagiarizing way to reproduce the information? Should the system not have reproduced the information at all? If it shouldn't reproduce things it learned, what is the system supposed to do?

Or is the issue that it reproduced an idea that it probably only read once? I'm genuinely not sure, and the original comment doesn't have much to go on.

The normal way to reproduce information which can only be found in a specific source would be to cite that source when quoting or paraphrasing it.

But the system isn't designed for that, why would you expect it to do so? Did somebody tell the OP that these systems work by citing a source, and the issue is that it doesn't do that?

"[massive deficiency] isn't a flaw of the program because it's designed to have that deficiency"

it is a problem that it plagiarizes, how does saying "it's designed to plagiarize" help????

"the murdermachine can't help but murder. alas, what can we do. guess we just have to resign ourselves to being murdered" says murdermachine sponsor/advertiser/creator/...

Please stop projecting positions onto me that I don't hold. If what people told the OP was that LLMs don't plagiarize, then great, that's a different argument from what I described in my reply, thank you for the answer. But you could try not being a dick about it?

It, uh... sounds like the flaw is in the design of the system, then? If the system is designed in such a way that it can't help but do unethical things, then maybe the system is not good to have.