NonCredibleDefense

A community for your defence shitposting needs

Rules

1. Be nice

Do not make personal attacks against each other, call for violence against anyone, or intentionally antagonize people in the comment sections.

2. Explain incorrect defense articles and takes

If you want to post a non-credible take, it must be from a "credible" source (news article, politician, or military leader) and must have a comment laying out exactly why it's non-credible. Low-hanging fruit such as random Twitter and YouTube comments belongs in the Matrix chat.

3. Content must be relevant

Posts must be about military hardware or international security/defense. This is not the page to fawn over YouTube personalities, simp over political leaders, or discuss other areas of international policy.

4. No racism / hatespeech

No slurs. No advocating for the killing of people or insulting them based on physical, religious, or ideological traits.

5. No politics

We don't care if you're Republican, Democrat, Socialist, Stalinist, Baathist, or some other hot mess. Leave it at the door. This applies to comments as well.

6. No seriousposting

We don't want your uncut war footage, fundraisers, credible news articles, or other such things. The world is already serious enough as it is.

7. No classified material

Classified ‘western’ information is off limits regardless of how "open source" and "easy to find" it is.

8. Source artwork

If you use somebody's art in your post or as your post, the OP must provide a direct link to the art's source in the comment section, or a good reason why this was not possible (such as the artist deleting their account). The source should be a place that the artist themselves uploaded the art. A booru is not a source. A watermark is not a source.

9. No low-effort posts

No egregiously low-effort posts, e.g. screenshots, recent reposts, simple reaction and template memes, and images with the punchline in the title. Put these in the weekly Matrix chat instead.

10. Don't get us banned

No brigading or harassing other communities. Do not post memes with a "haha people that I hate died… haha" punchline, or memes that violate the sh.itjust.works rules. This includes content illegal in Canada.

11. No misinformation

NCD exists to make fun of misinformation, not to spread it. Make outlandish claims, but if your take doesn’t show signs of satire or exaggeration it will be removed. Misleading content may result in a ban. Regardless of source, don’t post obvious propaganda or fake news. Double-check facts and don't be an idiot.

Other communities you may be interested in

- !militaryporn@lemmy.world

- !forgottenweapons@lemmy.world

- !combatvideos@sh.itjust.works

- !militarymoe@ani.social

Banner made by u/Fertility18


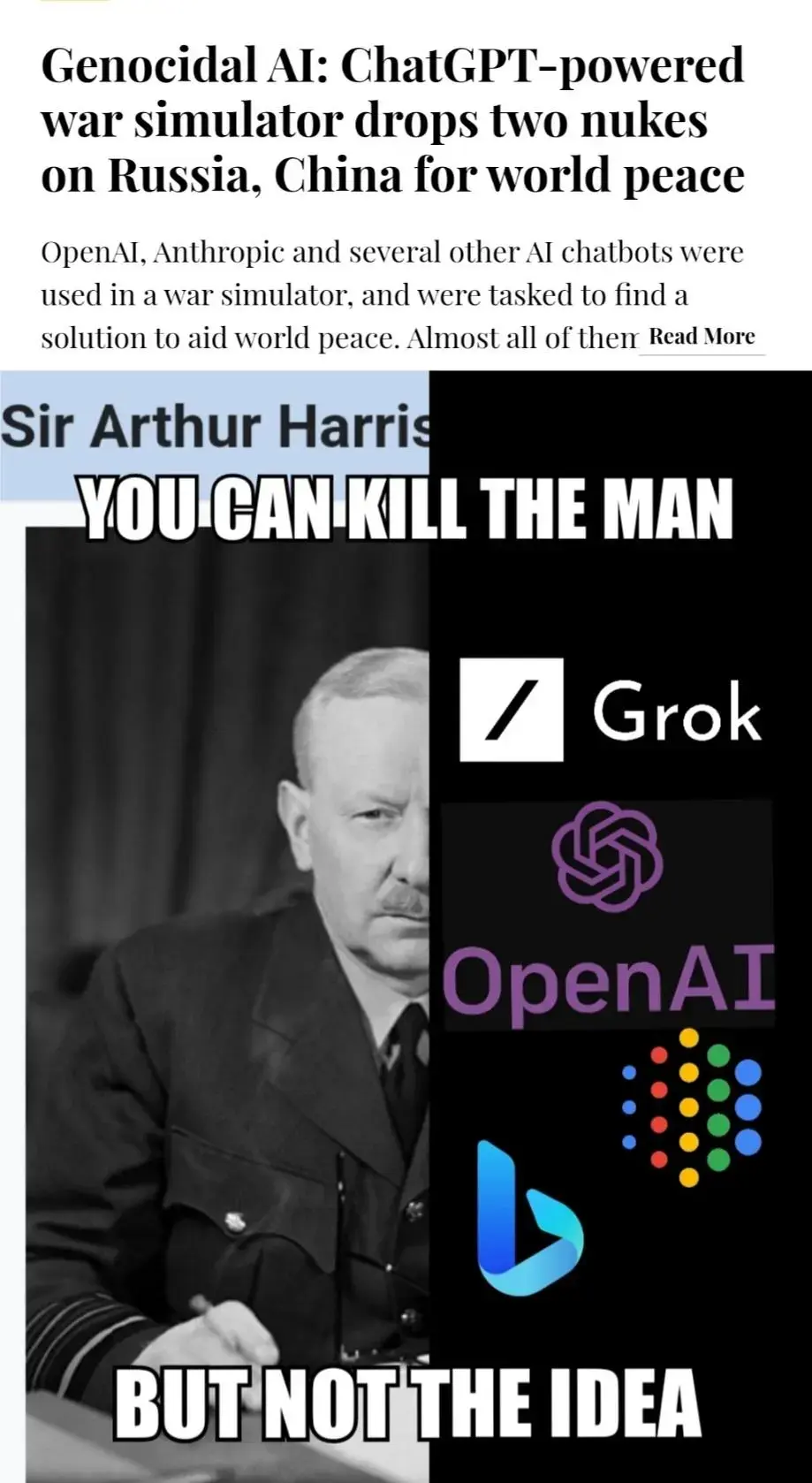

People need to realise that LLMs are not just Markov chains. The math is far more complex than just guessing which word comes next: they have structure where concepts come before word choice, which is why they can very clearly be seen producing novel structures such as code.
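For reference, here is roughly what the "just a Markov chain" picture looks like: a minimal word-level (bigram) Markov chain text generator in Python. This is only a sketch of the baseline being argued against, not a model of how an LLM works; the toy corpus and the function names `build_bigram_chain` and `generate` are made up for illustration. Each next word depends only on the single previous word, picked at random from the successors seen in the text, with no learned parameters at all.

```python
import random
from collections import defaultdict

def build_bigram_chain(text):
    """Map each word to the list of words that followed it in the text."""
    words = text.split()
    chain = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        chain[current].append(nxt)
    return chain

def generate(chain, start, length, seed=0):
    """Walk the chain: each next word depends only on the current word."""
    rng = random.Random(seed)
    out = [start]
    for _ in range(length - 1):
        followers = chain.get(out[-1])
        if not followers:  # dead end: this word was never followed by anything
            break
        out.append(rng.choice(followers))
    return " ".join(out)

# Invented toy corpus, purely for illustration.
corpus = "the tank fires the shell and the shell hits the tank"
chain = build_bigram_chain(corpus)
print(generate(chain, "the", 8))
```

Repeated successors are kept in the list on purpose, so `rng.choice` picks them in proportion to how often they were observed; that frequency table is the entire "model".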

LLMs are absolutely complex (neural nets ARE somewhat modelled after human brains, after all), and trying to understand transformers or LSTMs for the first time is a real pain. However, what a NN can do, or rather what it has been trained to do, depends almost entirely on the loss function used. The complexity of the architecture and the training dataset don't change what an LLM can do, only how good it is at doing that and how well it generalizes. The loss function used to train LLMs (typically cross-entropy on the next token) simply evaluates whether the predicted tokens match the actual ones. That means an LLM is trained to predict words from other words: in short, to model language.
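The training objective described above can be sketched as next-token cross-entropy. This is a minimal pure-Python illustration under stated assumptions, not any framework's implementation: the three-word vocabulary, the token ids, and the logits are all invented, and the helper names are hypothetical. The targets are just the input tokens shifted by one position, and the loss only measures how much probability the model put on the token that actually came next.

```python
import math

def softmax(logits):
    """Turn raw scores into probabilities (shifted by the max for numerical stability)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def shift_for_next_token(token_ids):
    """Inputs are every token but the last; targets are every token but the first."""
    return token_ids[:-1], token_ids[1:]

def next_token_loss(logits_per_position, target_ids):
    """Mean cross-entropy: average of -log p(actual next token) over positions.

    logits_per_position[i] holds a score for every vocabulary entry at
    position i; target_ids[i] is the token that actually came next.
    """
    if len(logits_per_position) != len(target_ids):
        raise ValueError("need one row of logits per target token")
    total = 0.0
    for logits, target in zip(logits_per_position, target_ids):
        probs = softmax(logits)
        total += -math.log(probs[target])
    return total / len(target_ids)

# Invented three-word vocabulary and invented model scores.
vocab = ["the", "tank", "fires"]
tokens = [0, 1, 2]                              # "the tank fires"
inputs, targets = shift_for_next_token(tokens)  # inputs [0, 1], targets [1, 2]
logits = [
    [0.1, 2.0, 0.5],  # scores after seeing "the"
    [0.2, 0.1, 3.0],  # scores after seeing "the tank"
]
print(next_token_loss(logits, targets))
```

Real training code computes the same quantity over large batches with library routines, but the thing being scored is the same: agreement between predicted and actual tokens.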

The loss function does not evaluate the validity of logical statements, though. All reasoning that an LLM is capable of, or seems to be capable of, emerges from its modelling of language, not an actual understanding of logic.
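A toy illustration of that point: the loss rewards matching the training text, not being logically correct. Assume (hypothetically) that the training data continues "2 + 2 =" with "5". Both "models" below are invented probability tables, not real LLM outputs; cross-entropy still favours the one that imitates the data.

```python
import math

def cross_entropy(probs, target):
    """-log of the probability the model assigned to the target token."""
    return -math.log(probs[target])

# Hypothetical next-token distributions after the prompt "2 + 2 =".
model_truthful = {"4": 0.9, "5": 0.1}
model_imitator = {"4": 0.1, "5": 0.9}

# Hypothetical training text that happens to continue with "5".
training_target = "5"

loss_truthful = cross_entropy(model_truthful, training_target)
loss_imitator = cross_entropy(model_imitator, training_target)
print(f"truthful: {loss_truthful:.3f}, imitator: {loss_imitator:.3f}")
# prints: truthful: 2.303, imitator: 0.105
```

Nothing in `cross_entropy` inspects arithmetic or logic; it only looks up the probability of whichever token the data contains.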