this post was submitted on 06 Dec 2023

Programmer Humor

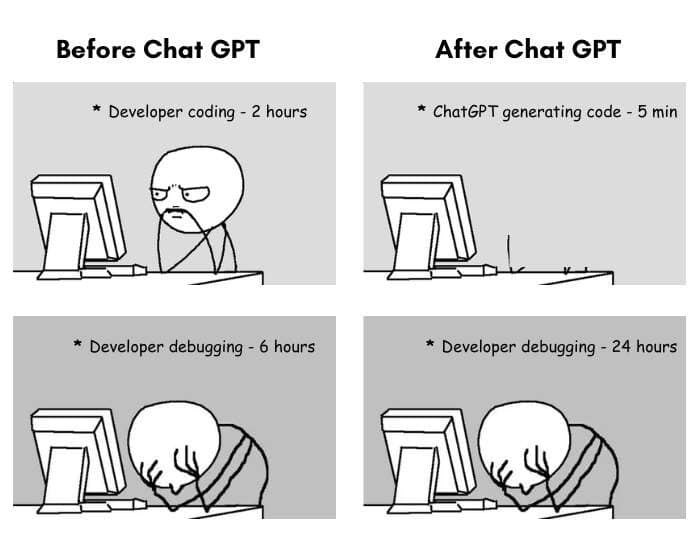

The trick is to split the code into smaller parts.

This is how I code using ChatGPT:

This works pretty well for me as long as I don't work with obscure frameworks or in large codebases.

Actually, that's the trick when writing code in general, and it's also how unit tests help when coding an application.
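A minimal sketch of that idea, assuming Python and a made-up task (averaging "name,score" lines). The function names `parse_line`, `average` and `report` are hypothetical, chosen only for illustration. Each piece is small enough to write (or have ChatGPT write) in one go and to check on its own before the glue code is trusted.

```python
# Hypothetical example: rather than one big "report" function, the task is
# split into small parts that can each be written and tested in isolation.


def parse_line(line: str) -> tuple[str, int]:
    """Split a 'name,score' line into a stripped name and an integer score."""
    name, score = line.split(",")
    return name.strip(), int(score)  # int() tolerates surrounding whitespace


def average(scores: list[int]) -> float:
    """Return the mean of a non-empty list of scores."""
    if not scores:
        raise ValueError("need at least one score")
    return sum(scores) / len(scores)


def report(lines: list[str]) -> str:
    """Glue code that only combines the small, separately tested parts."""
    scores = [parse_line(line)[1] for line in lines]
    return f"{len(scores)} entries, average {average(scores):.1f}"


# Small unit tests (pytest-style plain asserts), one per part.
def test_parse_line():
    assert parse_line("alice, 90") == ("alice", 90)


def test_average():
    assert average([80, 90, 100]) == 90.0


def test_average_rejects_empty():
    try:
        average([])
    except ValueError:
        return
    raise AssertionError("expected ValueError for an empty list")


def test_report():
    assert report(["a,80", "b,90"]) == "2 entries, average 85.0"


if __name__ == "__main__":
    for test in (test_parse_line, test_average,
                 test_average_rejects_empty, test_report):
        test()
    print("all small-part tests passed")
```

If one of the generated parts is wrong, the failing test points at that part alone instead of at the whole program.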

I guess it’s time to become a PM and spend the rest of my life drawing ugly PowerPoint slides.

As someone doing management, I would kill to have ChatGPT build ugly slides for me.

You'd search it anyway.

To be fair, you're also describing working with other people.

So my job (electrical engineering) has been pretty stagnant recently (just launched a product, no V2 on the horizon yet), so I've taken my free time to brush up on my skills.

I asked my friend (an EE at Apple) what skills I should acquire to stay relevant. He suggested three things: FPGAs, machine learning, and cloud computing. So far, I've made some inroads on FPGAs.

But I keep hearing about people unironically using ChatGPT in professional/productive environments. In your opinion, is it a fun tool for the lazy, or a tool that will be necessary in the future? Will employers in the future expect fluency with it?

Right now it's a good but limited tool if you know how to use it. But it can't really do anything a professional in a given field can't do already. Although it may be a bit quicker at certain tasks, there is always a risk of errors sneaking in that can become a headache later.

So right now I don't think it's a necessary tool. In the future I think it will become necessary, but at that point I don't think it will require much skill to use anymore, as it will be much better at both understanding and actually accomplishing what you want. Right now the skill in using GPT-4 is mostly in being able to work around its limitations.

Speculation time!

I don't think the point where it will be both necessary and easy to use is far off, tbh. I'm not talking about AGI or anything close to that, but I think all it needs to reach that point is a version of GPT-4 that stays consistent over long code generation and is able to better plan out its work and then follow that plan for a long time.

That's like asking in the early '90s whether knowing how to use a search engine would be a required skill.

Without a doubt. Just don't rely on it for your own professional knowledge; use it to get the busywork done and automate where you can. I have virtually replaced my search engine needs with Bing AI when troubleshooting at work, because it can find PDF manuals for obscure network hardware faster than I can sift through the first five pages of a Google search. It's also one of those things where the skill of the operator can change the output from garbage to gold. If you can't describe your problem or articulate what you want the solution to look like, then your AI is going to be just as clueless.

I don't know what the future will hold or how much of our white-collar workforce will be replaced by AI in the coming decades, but our cloud and automation engineers are not only leveraging LLMs but actively programming and training in-house models on company data. Bottom-rung data entry is going the way of the dodo in the next ten years for sure. Programmers will likely see the same change that translators did after translation software was developed: they moved from doing the job themselves to QA'ing the software.

Times are changing, and getting on board with using AI, as well as learning how to integrate it, will be the next big thing in the IT world. It's not going to replace us anytime soon, but it will shrink the workforce as the years go by.

This is exactly how I use it. Just like with conversations, ChatGPT tends to lose the plot after a while. It starts to “forget” the start of the conversation, and has trouble parsing things. It’s great for the first few paragraphs then begins to drift. So only use it for a few “paragraphs” worth of code at a time.

And as always, you need to make sure that it’s not just pretending to know. It will confidently feed you incorrect information, so you need to double-check its output.